Still in the wonderful city of Chicago. In my last blog post I highlighted that some enterprises will need to develop their own MDM system rather than purchase one, and that there are a number of patterns that can be used as a starting point.

Once an MDM system is available, other applications need to make use of it. This is where MDM-aware consuming applications come into play. MDM-aware applications are prepared to:

- Use Outside Data Quality Processes for Data Entry Verification

- Pull Key Data Elements from an Outside Master Data Source

- Push Their Own Data to External Master Data Management Systems

In effect, MDM-aware applications have the ability to synchronize data to MDM hubs, fetch master data from MDM hubs, and utilize MDM data quality processes at all data capture points.

Making an existing operational system MDM-aware entails changing its behavior such that it can operate with the master data sitting outside of its database, without impacting support of its core processes and functions.
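To make "operating with master data outside of its database" a little more concrete, here is a minimal Python sketch under stated assumptions. An order system stores only a reference to the customer's master ID and resolves the customer's attributes from the hub when needed, keeping the last known copy so order processing can continue if the hub is briefly unreachable. `Order`, `CustomerResolver`, and the `fetch_customer` callable are hypothetical names for illustration, not any MDM product's API.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional


@dataclass
class Order:
    order_id: str
    customer_master_id: str  # reference to the master record held in the MDM hub
    amount: float


class CustomerResolver:
    """Resolves customer attributes from the MDM hub (hypothetical interface).

    The last successfully fetched copy of each record is kept so that core
    processes keep working, possibly with stale data, during a hub outage.
    """

    def __init__(self, fetch_customer: Callable[[str], dict]) -> None:
        self._fetch = fetch_customer
        self._last_known: Dict[str, dict] = {}

    def resolve(self, master_id: str) -> Optional[dict]:
        try:
            record = self._fetch(master_id)
        except ConnectionError:
            # Hub unreachable: fall back to the last known copy, if any.
            return self._last_known.get(master_id)
        self._last_known[master_id] = record
        return record


# Usage with an in-memory stand-in for the hub.
hub_records = {"C-001": {"name": "Acme Corp", "country": "US"}}
hub_available = True


def fake_fetch(master_id: str) -> dict:
    if not hub_available:
        raise ConnectionError("MDM hub unavailable")
    return dict(hub_records[master_id])


resolver = CustomerResolver(fake_fetch)
order = Order("O-1", "C-001", 250.0)
online = resolver.resolve(order.customer_master_id)   # fetched from the hub
hub_available = False
offline = resolver.resolve(order.customer_master_id)  # served from the last known copy
```

Falling back to a stale copy is a design choice; some processes may prefer to fail outright rather than act on out-of-date master data.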

With the various MDM requirements, MDM patterns, and information acquisition techniques available, there is no single way to architect MDM. For illustrative purposes, the rest of this blog post focuses on an example scenario that highlights information acquisition mechanisms, components, and interaction points, using a distributed system-of-entry approach. These are by no means the only interactions, but they highlight the range of options and the flexibility that an MDM solution can offer.

Areas of Responsibility

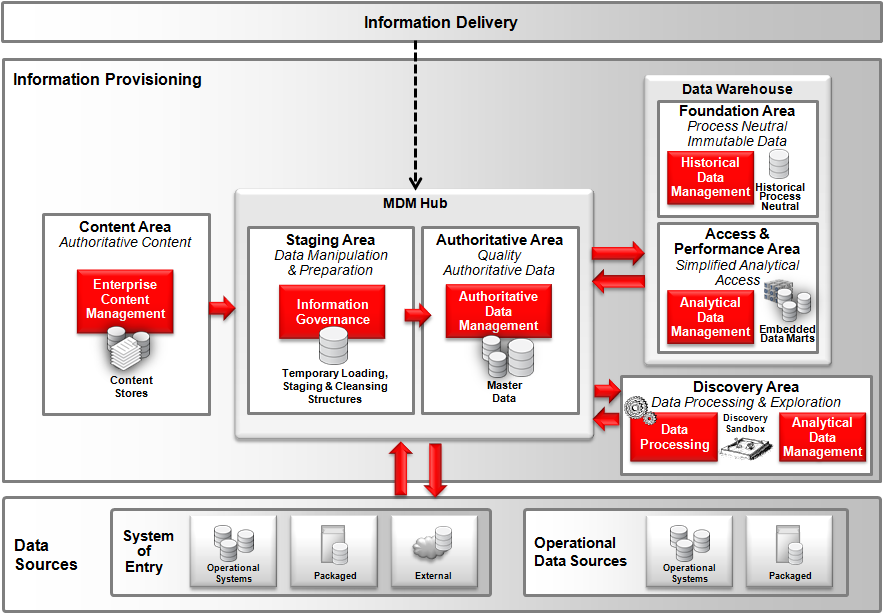

This graphic illustrates the ingestion points between the various areas of responsibility within the information provisioning layer. The MDM hub is composed of two key areas of responsibility: the Authoritative Area, which manages the master and key reference entities, and the staging area, with which it works closely to cleanse ingested data. This ingested data can originate from a number of areas, including operational data sources, the Data Warehouse, and the discovery area. In turn, the MDM hub is also a provider of master data to a number of areas.
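A minimal sketch of the two areas, assuming a single caller-supplied cleansing function: records from any source land in the staging area first, only records that pass cleansing are promoted into the authoritative area, and failures stay behind for review. The class names, source labels, and cleansing rule are illustrative assumptions.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class StagedRecord:
    source: str   # hypothetical labels, e.g. "operational", "data_warehouse", "discovery"
    payload: dict


@dataclass
class MdmHub:
    # Returns a cleansed record, or None if the record cannot be cleansed.
    cleanse: Callable[[dict], Optional[dict]]
    staging: List[StagedRecord] = field(default_factory=list)
    authoritative: Dict[str, dict] = field(default_factory=dict)
    rejected: List[StagedRecord] = field(default_factory=list)

    def ingest(self, source: str, payload: dict) -> None:
        """Every source writes to staging; nothing goes straight to the authoritative area."""
        self.staging.append(StagedRecord(source, payload))

    def promote(self) -> None:
        """Cleanse everything in staging; promote successes, keep failures for review."""
        pending, self.staging = self.staging, []
        for rec in pending:
            cleaned = self.cleanse(rec.payload)
            if cleaned is None:
                self.rejected.append(rec)
            else:
                self.authoritative[cleaned["id"]] = cleaned


def simple_cleanse(p: dict) -> Optional[dict]:
    """Toy rule (assumption): id and name are mandatory; normalize both."""
    if not p.get("id") or not p.get("name"):
        return None
    return {"id": p["id"].strip().upper(), "name": " ".join(p["name"].split()).title()}


hub = MdmHub(cleanse=simple_cleanse)
hub.ingest("operational", {"id": " c-001 ", "name": "acme   corp"})
hub.ingest("discovery", {"id": "c-002", "name": ""})
hub.promote()
```

After `promote()`, the authoritative area holds only the cleansed `C-001` record, and the `discovery` record with no name sits in `rejected`.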

Interactions

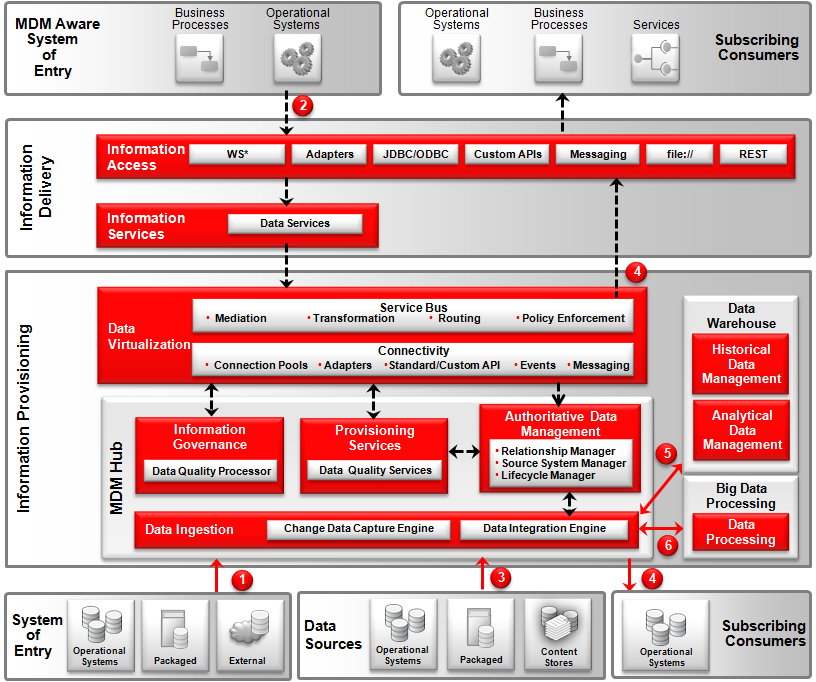

In addition to ingestion mechanisms, this graphic highlights how MDM makes effective use of service-oriented architecture (SOA) to propagate and expose master data to interested applications. Without a service-oriented approach, there is a danger that MDM creates high-quality master data that simply becomes another data silo. In effect, SOA and MDM need each other if the full potential of their respective capabilities is to be realized.

- Sometimes it is not feasible to update existing operational systems to be MDM-aware. This can happen when application source code is not available, when proprietary legacy applications do not allow changes to their interfaces, or when a system is scheduled to be decommissioned soon and enhancements to it are therefore not considered worthwhile. In this case, operational systems that are used as systems of entry act as passive participating applications: data changes in these systems are detected and pushed to the MDM hub for cleansing, de-duplication, and enrichment. In effect, this performs a synchronization of authoritative data. It is important to be able to dynamically synchronize data into and out of the hub. The synchronization doesn’t have to be real-time, although there are benefits in doing so. Since the whole point is to build a “Single Source of Truth” for a particular entity such as customers or products, having out-of-date information in the hub, or failing to synchronize data quality improvements back to the original source systems, can defeat the whole purpose of the project. This synchronization can use many different approaches, such as asynchronous or batch processing. This non-intrusive approach can lead to quicker MDM implementations. As data flows from the operational system into the MDM hub, it is transformed into the common authoritative data model. The MDM hub makes full use of the data quality processor via the appropriate Provisioning Services. This loosely coupled approach to invoking data quality processes allows the MDM hub to use different data quality processors depending on the entity in question, and makes it easy to replace a data quality processor should the need arise in the future. The MDM hub then consolidates, cleanses, enriches, and de-duplicates the data and builds a golden record.

- Where it is feasible to make existing operational systems MDM-aware, a synchronous pull approach is used at the time of data entry. The operational system makes an authoritative data service query request for a list of records that match the specified selection criteria. The MDM hub searches its master index and returns the list of records. Armed with these records, the operational system enables the end user to review them and select the appropriate authoritative record. Once a record is selected, the operational system makes another data service request to the MDM hub, this time to fetch the attributes of the selected record. These match and fetch data service requests are synchronous and enable real-time interaction between the operational system and the MDM hub. Through these data service requests, consuming applications have on-demand access to the single source of truth for authoritative data, thus preventing duplicate and inaccurate data entry. Once the operational system has created a new record and/or updated an existing one, the authoritative record needs to be synchronized with the MDM hub in the same manner as described in the first bullet point above.

- No matter which MDM hub pattern is used, an initial load of authoritative data from the source systems into the MDM hub is required to build the initial golden records. Due to the volume of data, this tends to be performed by a bulk data ingestion mechanism. From time to time, delta loads may also be necessary. These loads can contain both structured and unstructured data. If the unstructured data is already held in a content store with adequate security and versioning support, it is best to leave the unstructured content where it is and load only the associated content store reference key into the MDM hub.

- The MDM hub’s source system manager maintains a central cross-reference, enabling the MDM hub to publish new and updated records to all participating subscribing information consumers. The data is transformed from the common authoritative data model into the format needed by each target system. Before publishing the data, the MDM hub enforces any associated security rules, making sure that only data that is allowed to be shared is published. A key benefit of a master data management solution is not only cleaning the data, but also sharing it back to the source systems as well as to other systems that need the information. It is preferable for the MDM hub to feed the golden record back to the source systems; otherwise the result is a never-ending cycle of data errors. Therefore, the source systems that initially loaded the data, or systems that took part in a delta load, may also be subscribing information consumers. An internal triggering mechanism creates and distributes change information to all subscribing consumers using a variety of mechanisms, such as bulk movement and messaging. All changes to the master data in the MDM hub can also be exposed to an event processing engine. The hub automatically triggers events and delivers predefined XML payload packages appropriate for each event. This enables the real-time enterprise and keeps master data in sync with all constituents across the IT landscape.

- The MDM hub holds accurate, authoritative, governed dimension data, the operational data cross-reference, and the hierarchy information for all key master data entities. With all the master data in the MDM hub, only one pipe is needed into historical/analytical data management systems (such as a Data Warehouse) to provide key dimensions such as Customer, Supplier, Account, Site, and Product.

- The MDM hub holds accurate, authoritative, and governed data that can provide contextual information to data processing activities. For example, authoritative data such as master IDs can enrich event processing by helping to identify, aggregate, and publish events. In addition, external data sets such as social media and government data, coupled with insights achieved through big data processing, can embellish the data held within an MDM hub. For example, using identity resolution mechanisms, the MDM hub can link new sources of external data, such as social metrics, with key master data such as customers. This enables insights such as customer and product sentiment, previously unknown relationships, and organization hierarchies.
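The first bullet above says changes in passive participating applications are "detected and pushed" to the hub. One way to detect changes without modifying the application is to compare successive snapshots of its tables (database triggers or log-based change data capture are common alternatives). This minimal sketch, under that assumption, diffs two snapshots and maps each change into a common model before it would be pushed to the hub. The source columns (`FNAME`, `LNAME`, `EMAIL_ADDR`) and the common-model fields are hypothetical.

```python
from typing import Dict, List

Snapshot = Dict[str, dict]  # primary key -> row


def diff_snapshots(previous: Snapshot, current: Snapshot) -> List[dict]:
    """Return insert/update/delete change events between two snapshots."""
    changes = []
    for key, row in current.items():
        if key not in previous:
            changes.append({"op": "insert", "key": key, "row": row})
        elif previous[key] != row:
            changes.append({"op": "update", "key": key, "row": row})
    # Sorted so that deletes are emitted in a deterministic order.
    for key in sorted(previous.keys() - current.keys()):
        changes.append({"op": "delete", "key": key, "row": previous[key]})
    return changes


def to_common_model(source: str, change: dict) -> dict:
    """Map a source-specific row into a (hypothetical) common customer model."""
    row = change["row"]
    return {
        "op": change["op"],
        "source_system": source,   # kept for the hub's cross-reference
        "source_key": change["key"],
        "full_name": f'{row["FNAME"]} {row["LNAME"]}',
        "email": row.get("EMAIL_ADDR"),
    }


before = {"17": {"FNAME": "Ann", "LNAME": "Lee", "EMAIL_ADDR": "ann@x.com"},
          "18": {"FNAME": "Bo", "LNAME": "Chan", "EMAIL_ADDR": None}}
after = {"17": {"FNAME": "Ann", "LNAME": "Lee", "EMAIL_ADDR": "ann.lee@x.com"},
         "19": {"FNAME": "Cy", "LNAME": "Diaz", "EMAIL_ADDR": "cy@x.com"}}

outbound = [to_common_model("CRM", c) for c in diff_snapshots(before, after)]
```

Snapshot diffing is simple but costs a full table scan per cycle, which is one reason it tends to fit the batch end of the synchronization spectrum.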
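The first bullet also describes invoking data quality processors through Provisioning Services in a loosely coupled way, so that different entities can use different processors and a processor can be replaced later. A minimal sketch of that idea, assuming a simple registry keyed by entity type: the hub depends only on a `DataQualityProcessor` protocol, and concrete processors are registered and swapped at runtime. All class names and cleansing rules here are hypothetical.

```python
from typing import Dict, Protocol


class DataQualityProcessor(Protocol):
    def process(self, record: dict) -> dict: ...


class CustomerDQ:
    """Toy customer rule (assumption): trim and lower-case email addresses."""
    def process(self, record: dict) -> dict:
        out = dict(record)
        out["email"] = (out.get("email") or "").strip().lower() or None
        return out


class ProductDQ:
    """Toy product rule (assumption): strip dashes and upper-case SKUs."""
    def process(self, record: dict) -> dict:
        out = dict(record)
        out["sku"] = out["sku"].replace("-", "").upper()
        return out


class ProvisioningServices:
    """Routes each record to the processor registered for its entity type."""

    def __init__(self) -> None:
        self._processors: Dict[str, DataQualityProcessor] = {}

    def register(self, entity: str, processor: DataQualityProcessor) -> None:
        # Registering again for the same entity replaces the processor,
        # without any change to the hub code that calls cleanse().
        self._processors[entity] = processor

    def cleanse(self, entity: str, record: dict) -> dict:
        try:
            processor = self._processors[entity]
        except KeyError:
            raise LookupError(f"no data quality processor registered for {entity!r}") from None
        return processor.process(record)


services = ProvisioningServices()
services.register("customer", CustomerDQ())
services.register("product", ProductDQ())
```

For example, `services.cleanse("product", {"sku": "ab-123"})` returns `{"sku": "AB123"}`; swapping in a different product processor is a single `register` call.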
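The last step of the first bullet, consolidating and de-duplicating records into a golden record, usually combines a match rule with a survivorship rule. This sketch uses deliberately simple assumptions: records match on a normalized email address, and for each attribute the most recently updated non-empty value survives. Real hubs typically use configurable fuzzy matching and richer survivorship policies; the field names here are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List

METADATA = {"source", "updated"}  # lineage fields, not business attributes


def match_key(record: dict) -> str:
    """Deliberately simple match rule (assumption): normalized email address."""
    return record["email"].strip().lower()


def build_golden_records(records: List[dict]) -> Dict[str, dict]:
    groups: Dict[str, List[dict]] = defaultdict(list)
    for rec in records:
        groups[match_key(rec)].append(rec)  # de-duplication: group matching records

    golden: Dict[str, dict] = {}
    for key, members in groups.items():
        merged: dict = {}
        # Survivorship (assumption): process oldest first so that the most
        # recent non-empty value of each attribute wins.
        for rec in sorted(members, key=lambda r: r["updated"]):
            for attr, value in rec.items():
                if attr not in METADATA and value not in (None, ""):
                    merged[attr] = value
        merged["email"] = key  # store the normalized form
        merged["sources"] = sorted(r["source"] for r in members)  # lineage
        golden[key] = merged
    return golden


records = [
    {"source": "CRM", "updated": "2013-01-10", "email": "Ann@X.com", "name": "Ann Lee", "phone": ""},
    {"source": "ERP", "updated": "2013-03-02", "email": "ann@x.com ", "name": "", "phone": "555-0100"},
    {"source": "Web", "updated": "2013-02-15", "email": "bo@y.com", "name": "Bo Chan", "phone": None},
]
golden = build_golden_records(records)
```

Here the CRM and ERP records collapse into one golden record that takes the name from CRM and the phone number from ERP, because the empty values are never allowed to overwrite populated ones.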
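The second bullet's synchronous match-then-fetch interaction can be sketched as two calls: a lightweight `match` that returns just enough for the user to choose a candidate, and a `fetch` that returns the full authoritative record once a choice is made. `MasterDataService`, `MatchCandidate`, and the substring-based matching over an in-memory index are hypothetical stand-ins for the hub's authoritative data services.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class MatchCandidate:
    master_id: str
    display_name: str  # just enough for the end user to pick the right record


class MasterDataService:
    """In-memory stand-in for the hub's authoritative data services (hypothetical)."""

    def __init__(self, records: Dict[str, dict]) -> None:
        self._records = records  # master index: master ID -> authoritative record

    def match(self, criteria: Dict[str, str]) -> List[MatchCandidate]:
        """Return candidates whose attributes contain every criterion value (case-insensitive)."""
        hits = []
        for master_id, rec in self._records.items():
            if all(v.lower() in str(rec.get(k, "")).lower() for k, v in criteria.items()):
                hits.append(MatchCandidate(master_id, rec["name"]))
        return hits

    def fetch(self, master_id: str) -> dict:
        """Return a copy of the full authoritative record for the selected candidate."""
        return dict(self._records[master_id])


service = MasterDataService({
    "C-001": {"name": "Acme Corp", "city": "Chicago", "duns": "123456789"},
    "C-002": {"name": "Acme Logistics", "city": "Denver", "duns": "987654321"},
    "C-003": {"name": "Zenith Ltd", "city": "Chicago", "duns": "555555555"},
})
candidates = service.match({"name": "acme"})  # shown to the user at data entry
chosen = candidates[0]                        # the user selects one candidate
record = service.fetch(chosen.master_id)      # full attributes fetched on selection
```

Splitting the interaction into two requests keeps the search response small, which matters when it sits on the data entry screen's critical path.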
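The third bullet's initial bulk load can be sketched as batching source rows into a load sink, while replacing any unstructured document that already lives in a secured, versioned content store with just its reference key. The batch size, the `contract_pdf` field, the `ecm://` keys, and the `load_batch` sink are all hypothetical; the same function could be fed only the changed rows for a delta load.

```python
from itertools import islice
from typing import Callable, Iterable, Iterator, List


def batched(rows: Iterable[dict], size: int) -> Iterator[List[dict]]:
    """Yield successive lists of at most `size` rows."""
    it = iter(rows)
    while True:
        batch = list(islice(it, size))
        if not batch:
            return
        yield batch


def to_hub_row(row: dict) -> dict:
    """Copy structured fields; keep only the content store key for the document."""
    out = {k: v for k, v in row.items() if k != "contract_pdf"}
    if "contract_pdf" in row:
        # The document stays in the content store; the hub stores its key.
        out["contract_ref"] = row["contract_pdf"]["store_key"]
    return out


def bulk_load(rows: Iterable[dict], load_batch: Callable[[List[dict]], None],
              size: int = 1000) -> int:
    """Load all rows in batches via `load_batch`; return the number of rows loaded."""
    total = 0
    for batch in batched(rows, size):
        load_batch([to_hub_row(r) for r in batch])
        total += len(batch)
    return total


loaded: List[List[dict]] = []
source_rows = [
    {"id": i, "name": f"Customer {i}",
     "contract_pdf": {"store_key": f"ecm://contracts/{i}", "bytes": b"..."}}
    for i in range(5)
]
count = bulk_load(source_rows, loaded.append, size=2)
```

With five rows and a batch size of two, the sink receives batches of 2, 2, and 1 rows, and none of them carries the document bytes.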
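The fourth bullet combines three ideas: a central cross-reference, security rules applied before publishing, and predefined XML payloads per event. This minimal sketch, under stated assumptions, builds one XML payload per subscriber that carries the subscriber's own local key and only the attributes it is permitted to receive. The subscriber names, allow-lists, and XML element names are hypothetical, and a real hub would hand the payloads to messaging or bulk movement rather than return them.

```python
import xml.etree.ElementTree as ET
from typing import Dict, Set

# Central cross-reference (hypothetical data): master ID -> {system: local key}.
CROSS_REFERENCE: Dict[str, Dict[str, str]] = {
    "C-001": {"CRM": "17", "ERP": "A-9001", "Billing": "B-42"},
}

# Security rule (hypothetical): attributes each subscriber may receive.
ALLOWED: Dict[str, Set[str]] = {
    "CRM": {"name", "email", "phone"},
    "ERP": {"name", "tax_id"},
    "Billing": {"name", "email", "tax_id"},
}


def build_event(event_type: str, master_id: str, subscriber: str, golden: dict) -> str:
    """Build one subscriber's XML payload, containing only permitted attributes."""
    root = ET.Element("MasterDataEvent", type=event_type)
    ET.SubElement(root, "MasterId").text = master_id
    # The cross-reference tells the subscriber which of its own records changed.
    ET.SubElement(root, "LocalKey").text = CROSS_REFERENCE[master_id].get(subscriber, "")
    attributes = ET.SubElement(root, "Attributes")
    for name in sorted(ALLOWED[subscriber].intersection(golden)):
        ET.SubElement(attributes, "Attribute", name=name).text = str(golden[name])
    return ET.tostring(root, encoding="unicode")


def publish(event_type: str, master_id: str, golden: dict) -> Dict[str, str]:
    """Return one payload per subscriber, keyed by subscriber name."""
    return {sub: build_event(event_type, master_id, sub, golden) for sub in ALLOWED}


golden = {"name": "Acme Corp", "email": "ap@acme.example", "tax_id": "12-3456789"}
payloads = publish("update", "C-001", golden)
```

Because filtering happens per subscriber, the CRM payload in this example never contains the tax ID, while the ERP and Billing payloads do.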
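The fifth bullet's "one pipe" into the Data Warehouse can be illustrated by deriving a Customer dimension directly from golden records: each master ID receives a stable surrogate key, the hub's hierarchy travels along as a parent reference, and the operational cross-reference becomes a lookup that maps fact rows from any source system to the same dimension member. The column names and the in-memory key map are hypothetical simplifications of what a warehouse load would do.

```python
from typing import Dict, List, Tuple


def build_customer_dimension(golden: Dict[str, dict],
                             existing_keys: Dict[str, int]) -> List[dict]:
    """Return dimension rows; assigns new surrogate keys in place in `existing_keys`."""
    next_key = max(existing_keys.values(), default=0) + 1
    rows = []
    for master_id in sorted(golden):
        if master_id not in existing_keys:
            existing_keys[master_id] = next_key  # a surrogate key is assigned only once
            next_key += 1
        rec = golden[master_id]
        rows.append({
            "customer_sk": existing_keys[master_id],
            "master_id": master_id,
            "name": rec["name"],
            "segment": rec.get("segment", "Unknown"),
            "parent_master_id": rec.get("parent"),  # hierarchy information from the hub
        })
    return rows


def source_key_lookup(xref: Dict[str, Dict[str, str]],
                      keys: Dict[str, int]) -> Dict[Tuple[str, str], int]:
    """Map (source system, local key) to the surrogate key, for resolving fact rows."""
    return {(system, local): keys[mid]
            for mid, systems in xref.items() if mid in keys
            for system, local in systems.items()}


golden = {"C-002": {"name": "Acme Logistics", "parent": "C-001"},
          "C-001": {"name": "Acme Corp", "segment": "Enterprise"}}
keys = {"C-001": 1}  # surrogate keys from a previous load
dim = build_customer_dimension(golden, keys)
lookup = source_key_lookup({"C-001": {"CRM": "17"}, "C-002": {"ERP": "A-77"}}, keys)
```

Re-running the load with the same key map keeps existing surrogate keys stable, which is what lets historical fact rows keep pointing at the right customer.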
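The last bullet's idea of linking external data such as social metrics to master customers can be sketched with a very simple identity resolution step: build an index from known identifiers (emails, social handles) to master IDs, resolve each external mention against it, and aggregate sentiment per master customer. The exact-match lookup, field names, and handles are assumptions; production identity resolution would typically be probabilistic.

```python
from collections import Counter
from typing import Dict, List, Optional

# Hypothetical master customers with known identifiers.
MASTER_CUSTOMERS = {
    "C-001": {"name": "Acme Corp", "emails": ["info@acme.example"], "handles": ["@acmecorp"]},
    "C-003": {"name": "Zenith Ltd", "emails": ["hello@zenith.example"], "handles": []},
}


def build_identity_index(masters: Dict[str, dict]) -> Dict[str, str]:
    """Map each normalized identifier to the master ID that owns it."""
    index = {}
    for master_id, rec in masters.items():
        for ident in rec["emails"] + rec["handles"]:
            index[ident.strip().lower()] = master_id
    return index


def resolve(index: Dict[str, str], external: dict) -> Optional[str]:
    """Return the master ID for an external record, or None if unresolved."""
    for field_name in ("email", "handle"):
        value = external.get(field_name)
        if value and value.strip().lower() in index:
            return index[value.strip().lower()]
    return None  # unresolved records could be queued for data steward review


def sentiment_by_customer(index: Dict[str, str], mentions: List[dict]) -> Dict[str, Counter]:
    """Aggregate mention sentiment per master customer."""
    result: Dict[str, Counter] = {}
    for m in mentions:
        master_id = resolve(index, m)
        if master_id is not None:
            result.setdefault(master_id, Counter())[m["sentiment"]] += 1
    return result


index = build_identity_index(MASTER_CUSTOMERS)
mentions = [
    {"handle": "@AcmeCorp", "sentiment": "positive"},
    {"handle": "@acmecorp", "sentiment": "negative"},
    {"email": "hello@zenith.example", "sentiment": "positive"},
    {"handle": "@unknown", "sentiment": "positive"},
]
summary = sentiment_by_customer(index, mentions)
```

The same master-ID lookup is what lets an event processing engine aggregate events from different channels against one customer, as the bullet suggests.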

Phew, that is more than enough on MDM.

Good luck, Now Go Architect…